Introduction

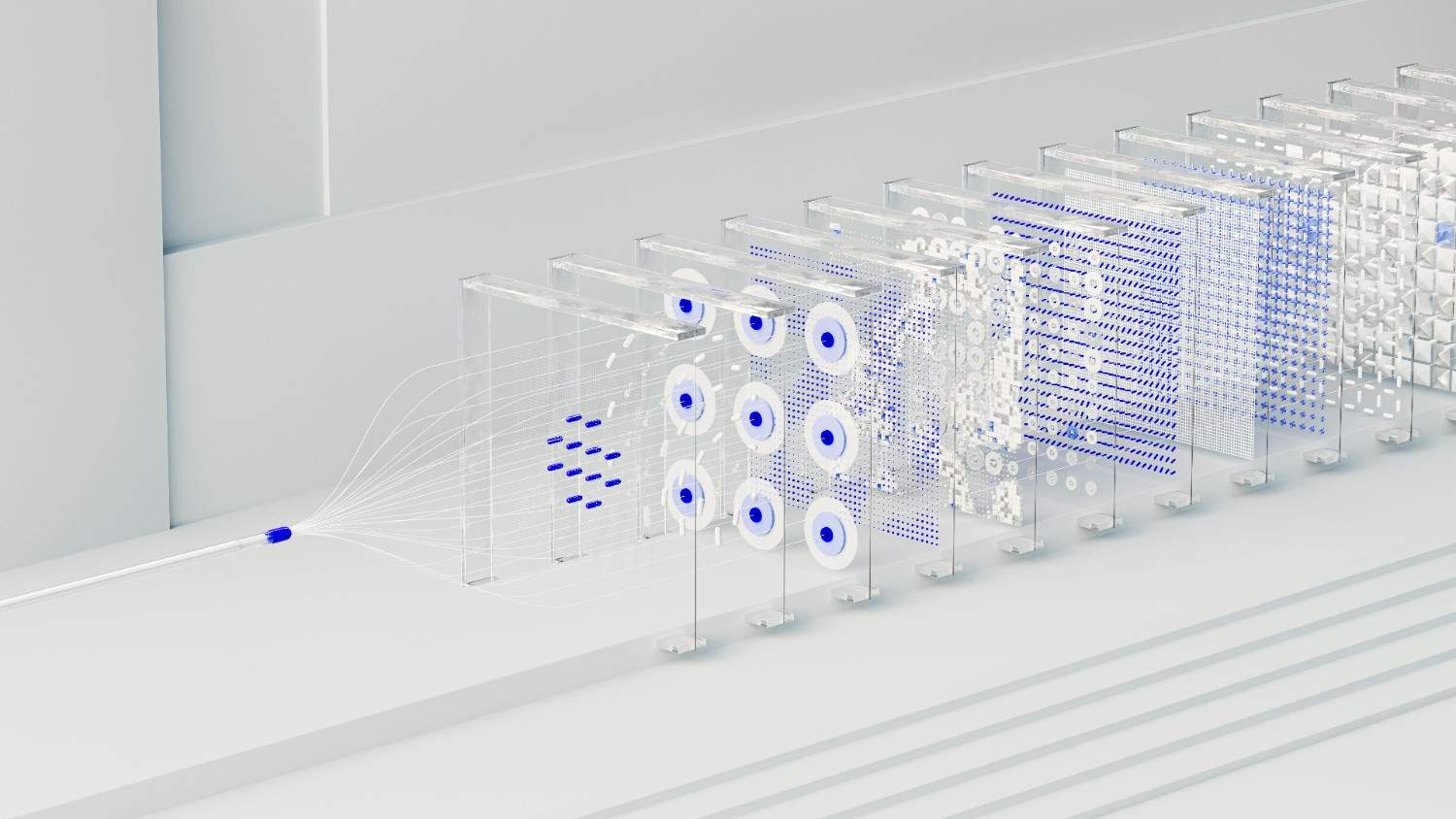

Artificial intelligence is no longer a peripheral experiment; it has become a critical investment reshaping industries globally. Before a single rack gets mounted, understanding why AI data center workloads demand fundamentally different infrastructure separates successful builds from costly missteps.

Most practitioners underestimate how sharply machine learning and deep learning workloads drive up compute, energy, and cooling demands at the same time. Unlike traditional IT operations, these systems require purpose-built environments engineered from day one for efficiency, reliability, and sustained high performance.

A well-informed approach ensures your data center becomes a long-term asset rather than an operational liability. Aligning business goals with scalable design decisions early means each infrastructure choice can be traced to a measurable outcome.

Understanding the Unique Needs of AI Workloads

Planning Your AI Data Center

AI data centers demand a fundamentally different planning approach than conventional facilities. Traditional infrastructure is designed around modest per-rack power densities, whereas AI workloads pack far more power into each rack, generate correspondingly more heat, and require tightly integrated power delivery. That makes cooling and power distribution primary design factors from day one. Overlooking these dependencies leads to compounding operational problems that are difficult and expensive to fix with a retrofit.
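To put rough numbers on that density gap, here is a minimal Python sketch that estimates per-rack electrical draw and the heat it produces. The server counts and per-server wattages are hypothetical placeholders, not vendor specifications; the conversion relies only on the fact that nearly all power consumed by IT equipment ends up as heat (1 W ≈ 3.412 BTU/h).

```python
# Minimal sketch: per-rack power and heat load.
# All server counts and wattages below are hypothetical; use vendor data in practice.

WATTS_TO_BTU_PER_HOUR = 3.412  # 1 W of IT load is dissipated as roughly 3.412 BTU/h of heat


def rack_power_kw(servers_per_rack: int, kw_per_server: float) -> float:
    """Total electrical draw of one rack in kW."""
    return servers_per_rack * kw_per_server


def rack_heat_btu_per_hour(rack_kw: float) -> float:
    """Heat the cooling system must remove, assuming all IT power becomes heat."""
    return rack_kw * 1000 * WATTS_TO_BTU_PER_HOUR


# Assumed: 20 general-purpose 1U servers at 0.4 kW each.
traditional_kw = rack_power_kw(servers_per_rack=20, kw_per_server=0.4)
# Assumed: 4 multi-GPU training servers at 10 kW each.
ai_kw = rack_power_kw(servers_per_rack=4, kw_per_server=10.0)

for label, kw in (("traditional", traditional_kw), ("AI training", ai_kw)):
    print(f"{label:>12}: {kw:5.1f} kW -> {rack_heat_btu_per_hour(kw):,.0f} BTU/h")
```

Under these assumptions the AI rack draws five times the power of the traditional rack (40 kW versus 8 kW), and the cooling plant must remove five times the heat.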

From my experience evaluating high-performance computing systems, the real complexity lies not in selecting GPUs or TPUs alone, but in architecting how these components coexist under peak workloads. Redundant power systems, paired with uninterruptible power supplies (UPS) and backup generators, directly determine uptime. For AI-backed initiatives, even a few minutes of interruption can cause significant financial and competitive loss; an interrupted training run, for example, loses all progress since its last checkpoint.
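The relationship between UPS batteries and backup generators can be sanity-checked with simple arithmetic: the batteries only need to carry the load until the generators start and accept it. The sketch below assumes a constant load, a fixed inverter efficiency, a hypothetical generator start time, and a hypothetical safety factor; real battery runtime also depends on discharge rate, temperature, and battery age.

```python
# Minimal sketch: does UPS battery ride-through cover generator start-up?
# Battery size, load, efficiency, start time, and safety factor are all hypothetical.


def ups_runtime_minutes(usable_battery_kwh: float, load_kw: float,
                        inverter_efficiency: float = 0.95) -> float:
    """Approximate minutes a UPS can carry a constant load on battery."""
    if load_kw <= 0:
        raise ValueError("load_kw must be positive")
    return usable_battery_kwh * inverter_efficiency / load_kw * 60


def covers_generator_start(runtime_min: float, generator_start_min: float,
                           safety_factor: float = 2.0) -> bool:
    """True if battery runtime exceeds generator start-and-transfer time by the safety factor."""
    return runtime_min >= generator_start_min * safety_factor


runtime = ups_runtime_minutes(usable_battery_kwh=100, load_kw=400)  # assumed sizing
print(f"ride-through: {runtime:.2f} min")
print("covers generator start:", covers_generator_start(runtime, generator_start_min=0.5))
```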

Efficient cooling systems aren’t passive infrastructure; they’re performance enablers. Facilities that combine liquid cooling with hot/cold aisle containment and chilled water systems can keep hardware within its rated temperature range at lower energy cost, which supports both hardware longevity and lower operating expenses. Managing temperature and energy consumption intelligently, including sourcing renewable energy such as solar and wind, aligns sustainability targets with long-term operational stability and resource planning.
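For liquid cooling, the basic sizing relationship is the heat balance Q = ṁ · c_p · ΔT: the coolant mass flow ṁ must carry the rack’s heat Q away with a temperature rise ΔT. This sketch assumes plain water (c_p ≈ 4186 J/(kg·K), density ≈ 1 kg/L) and a hypothetical 40 kW rack; glycol mixtures and real cold-plate loops have different properties and pressure-drop constraints.

```python
# Minimal sketch: coolant flow needed to remove a rack's heat, from Q = m_dot * c_p * dT.
# Assumes plain water; glycol blends have lower specific heat and need more flow.

WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate value near room temperature
WATER_DENSITY_KG_PER_L = 1.0             # approximation


def coolant_flow_lpm(heat_kw: float, delta_t_k: float) -> float:
    """Water flow in litres per minute to absorb heat_kw with a delta_t_k temperature rise."""
    mass_flow_kg_s = heat_kw * 1000 / (WATER_SPECIFIC_HEAT_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY_KG_PER_L * 60


# Hypothetical 40 kW rack with a 10 K supply-to-return temperature rise.
print(f"{coolant_flow_lpm(heat_kw=40, delta_t_k=10):.1f} L/min")  # about 57.3 L/min
```

Doubling the allowed temperature rise halves the required flow, which is one reason loop design and ΔT targets are usually set together.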

AI Data Center Infrastructure Requirements

Selecting High-Performance Hardware

Choosing the right hardware for an AI data center starts well before racking a single server. From firsthand experience deploying high-performance computing environments, GPUs remain the backbone of AI processing power, but pairing them with fast storage systems is what separates functional builds from truly efficient ones: an accelerator waiting on data is an expensive idle asset. Every component must address the specific requirements of demanding AI workloads.

What engineers often overlook is that cooling systems aren’t secondary; they’re load-bearing decisions. The heat generated by cutting-edge processors can force thermal throttling that slows a job well before a training run completes. Building around specialized hardware means accounting for its considerable energy draw from day one, so power requirements must be scoped alongside compute, not after it.
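Scoping power alongside compute usually starts from the IT load and scales it by Power Usage Effectiveness (PUE), the ratio of total facility energy to IT equipment energy. The rack count, per-rack load, and PUE values below are hypothetical; the sketch only shows how much extra capacity cooling and electrical losses consume on top of the compute itself.

```python
# Minimal sketch: total facility power implied by an IT load and a PUE ratio.
# PUE = total facility energy / IT equipment energy, so facility = IT load * PUE.


def facility_power_kw(it_load_kw: float, pue: float) -> float:
    """Total facility draw for a given IT load and PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_kw * pue


it_load_kw = 32 * 40.0  # hypothetical: 32 racks at an assumed 40 kW each
for pue in (1.2, 1.5):  # assumed efficiency scenarios
    total = facility_power_kw(it_load_kw, pue)
    print(f"PUE {pue}: {total:,.0f} kW total, {total - it_load_kw:,.0f} kW overhead")
```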

Dense server racks connected by a low-latency network architecture keep computational throughput flowing uninterrupted. Proper airflow management inside the facility extends component lifespan. Future scalability depends on these early structural choices: growing AI adoption will expose an undersized rack configuration or insufficient cooling capacity long before a second expansion phase begins.

Designing Efficient Network Architecture

Optimize Network Architecture

Most engineers I’ve worked with approach network architecture backward: they select advanced networking equipment before confirming whether their high-bandwidth links can sustain the parallel processing demands of clustered GPUs. Starting from the actual workloads reveals something crucial: seamless data transfer breaks down without a rack layout and cabling discipline that support the physical throughput path end to end.

Low-latency performance inside dense compute clusters depends heavily on identifying bottlenecks during early design phases. In my experience, 100 Gbps Ethernet reduces congestion between high-speed SSD storage and the nodes running active inference jobs. Power distribution topology matters here as well: in synchronized distributed training, a single node that throttles or drops out because of an unstable power feed can stall every other node waiting on it.
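To see why link bandwidth matters for clustered GPUs, consider gradient synchronization in data-parallel training. A common algorithm is ring all-reduce, in which each GPU sends and receives about 2(N-1)/N times the gradient buffer per synchronization. The bandwidth-only estimate below uses a hypothetical 7-billion-parameter model with 2-byte gradients and ignores latency, protocol overhead, and the overlap with computation that training frameworks typically use, so treat it as a rough lower bound on the communication work itself, not the exposed step time.

```python
# Minimal sketch: bandwidth-only time for one ring all-reduce of a gradient buffer.
# Ignores per-hop latency, protocol overhead, and compute/communication overlap.


def ring_allreduce_seconds(buffer_bytes: float, num_gpus: int, link_gbps: float) -> float:
    """Each participant transfers 2 * (N - 1) / N of the buffer over its link."""
    if num_gpus < 2:
        return 0.0  # nothing to synchronize
    bytes_per_gpu = 2 * (num_gpus - 1) / num_gpus * buffer_bytes
    link_bytes_per_s = link_gbps * 1e9 / 8  # gigabits per second -> bytes per second
    return bytes_per_gpu / link_bytes_per_s


gradient_bytes = 7e9 * 2  # hypothetical 7B-parameter model with 2-byte (bf16) gradients
for gbps in (100, 400):
    seconds = ring_allreduce_seconds(gradient_bytes, num_gpus=64, link_gbps=gbps)
    print(f"{gbps} Gb/s: about {seconds:.2f} s per full gradient all-reduce")
```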

Networked NVMe (Non-Volatile Memory Express) storage traffic deserves purpose-built switching rather than repurposed general-purpose networking gear. Placing fast NVMe storage paths on a dedicated fabric minimizes contention and sustains quick data access across large-scale datasets. Treat every segment, from high-capacity memory buses down to inter-rack fiber, as a deterministic performance contract, not an assumption.
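Sizing the storage tier follows a similar back-of-the-envelope pattern: multiply GPU count by the training samples each GPU consumes per second and by the average sample size, then divide by per-drive read bandwidth. Every figure in the example (sample rate, 150 KB sample size, 6.5 GB/s per drive, 30% headroom) is a hypothetical placeholder, and the calculation uses sequential-read bandwidth, so workloads dominated by small random reads will likely need more drives.

```python
import math

# Minimal sketch: NVMe drives needed to keep GPUs fed with training data.
# All throughput figures are hypothetical; measure your own data pipeline.


def drives_needed(num_gpus: int, samples_per_s_per_gpu: float, bytes_per_sample: float,
                  drive_read_gb_s: float, headroom: float = 1.3) -> int:
    """Drives whose combined read bandwidth covers GPU input demand plus headroom."""
    required_gb_s = num_gpus * samples_per_s_per_gpu * bytes_per_sample / 1e9 * headroom
    return math.ceil(required_gb_s / drive_read_gb_s)


# Assumed: 64 GPUs, 2,000 samples/s each, 150 KB per sample, 6.5 GB/s per drive.
print(drives_needed(num_gpus=64, samples_per_s_per_gpu=2000,
                    bytes_per_sample=150e3, drive_read_gb_s=6.5))  # prints 4
```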

Power and Cooling Considerations

Secure Sufficient Power Capacity

Every AI data center I’ve worked with hits the same wall early: power constraints that no amount of scalable hardware can overcome after the fact. Before deploying dense compute clusters or GPU-accelerated servers, you need a reliable supply that delivers stable power to every rack. Securing utility agreements and backup generation isn’t optional; it’s foundational to sustained operations.
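Backup generation is commonly sized as N+1: enough units to carry the critical load, plus at least one spare so a single failure or maintenance outage does not drop capacity below demand. The sketch uses a hypothetical 1,920 kW load and 750 kW units, and it ignores derating for altitude, ambient temperature, and load steps, which real sizing must include.

```python
import math

# Minimal sketch: N+1 (or N+k) generator count for a given critical load.
# Unit rating and load are hypothetical; apply manufacturer derating in real designs.


def generators_required(critical_load_kw: float, generator_kw: float, spares: int = 1) -> int:
    """N units to carry the load, plus `spares` redundant units."""
    n = math.ceil(critical_load_kw / generator_kw)
    return n + spares


print(generators_required(critical_load_kw=1920, generator_kw=750))  # 3 needed + 1 spare = 4
```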

Implement Advanced Cooling Systems

What most planners underestimate is that the heat generated by high-intensity machine learning workloads accumulates faster than traditional ventilation systems can remove it. Liquid cooling and immersion cooling have moved from experimental to essential, particularly for facilities running complex models against massive datasets. In my observation, direct-to-chip solutions consistently outperform traditional air cooling on both energy consumption and hardware longevity.

Thermal architecture deserves the same engineering rigor as compute planning. Poorly managed cooling causes downtime that disrupts data processing pipelines and undermines consistent performance. Facilities that add AI-driven monitoring to their thermal management can detect anomalies before failures cascade. Real-time thermal telemetry, paired with capable monitoring tools, turns reactive cooling into predictive infrastructure that scales with growing AI workloads.
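AI-driven thermal monitoring can take many forms. As a much simpler stand-in, the sketch below flags temperature readings that jump well above a rolling statistical baseline (a rolling z-score). The window size, threshold, and sample readings are hypothetical, and a production system would typically use richer models across many sensors and would exclude confirmed anomalies from the baseline, which this sketch does not do.

```python
from collections import deque
from statistics import mean, pstdev

# Minimal sketch: flag hot-spot readings with a rolling z-score.
# This is a simple statistical baseline, not a machine-learning model.


def detect_thermal_anomalies(readings_c, window=12, threshold=3.0):
    """Return indices of readings more than `threshold` standard deviations
    above the mean of the previous `window` readings (upward spikes only)."""
    history = deque(maxlen=window)
    anomalies = []
    for i, temp_c in enumerate(readings_c):
        if len(history) == window:
            baseline = mean(history)
            spread = pstdev(history)
            if spread > 0 and (temp_c - baseline) / spread > threshold:
                anomalies.append(i)
        history.append(temp_c)  # every reading, including flagged ones, joins the baseline
    return anomalies


# Hypothetical inlet temperatures (deg C) sampled at a fixed interval.
samples = [24.0, 24.2, 23.9, 24.1] * 3 + [24.0, 31.5, 24.1]
print(detect_thermal_anomalies(samples))  # [13]
```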

Scalability and Flexibility

Plan for Scalability and Future Growth

Modular data center designs avoid the trap of rigid overbuilding. Experienced operators understand that scalability built into the initial infrastructure determines how cost-effectively a facility can expand later. Rather than reacting to surges in AI adoption, modular architectures enable incremental capacity deployment, avoiding disruption while supporting everything from small-scale AI applications to enterprise-level machine learning models.
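Modular expansion can be planned as a simple capacity schedule: given a demand forecast and a fixed module size, bring modules online only when forecast load requires them. The forecast values and the 500 kW module size below are hypothetical.

```python
import math

# Minimal sketch: incremental module deployment against a demand forecast.
# Module size and forecast are hypothetical placeholders.


def modules_to_deploy(demand_by_period, module_kw):
    """Return (period, modules_online, modules_added) for each forecast period.

    Capacity is never removed, so modules_online never decreases."""
    plan = []
    online = 0
    for period, demand_kw in demand_by_period:
        needed = math.ceil(demand_kw / module_kw)
        added = max(0, needed - online)
        online += added
        plan.append((period, online, added))
    return plan


forecast = [(1, 800), (2, 1400), (3, 2600)]  # (year, forecast IT load in kW), assumed
for year, online, added in modules_to_deploy(forecast, module_kw=500):
    print(f"year {year}: {online} modules online (+{added})")
```

With this forecast, four of the six modules needed by year 3 are deferred beyond the first year instead of being built up front.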

Edge computing fundamentally reshapes how growth gets planned: processing data close to where it is generated cuts latency and reduces traffic over the wider network. A hybrid approach bridges centralized compute with distributed capacity, positioning infrastructure as a strategic asset aligned with 2025 demands. Operators who treat sustainability and expandability as joint priorities are better placed to run sustainable operations and stay ahead of the regulatory and environmental standards governing AI data center design.
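Much of the latency benefit of edge placement comes from physics: light in optical fiber travels at roughly 200,000 km/s, or about 5 µs per kilometer. The sketch estimates round-trip propagation delay only. The 1.5× route factor (fiber paths are longer than straight-line distance) and the example distances are assumptions, and real latency adds switching, queuing, and processing time.

```python
# Minimal sketch: round-trip propagation delay over optical fiber.
# Excludes switching, queuing, serialization, and processing delays.

FIBER_US_PER_KM = 5.0  # light covers roughly 200,000 km/s in fiber, about 5 microseconds per km


def propagation_rtt_ms(distance_km: float, route_factor: float = 1.5) -> float:
    """Round-trip time in milliseconds; route_factor (assumed) inflates straight-line distance."""
    return 2 * distance_km * route_factor * FIBER_US_PER_KM / 1000


for site, km in (("edge site", 20), ("regional data center", 1500)):  # hypothetical distances
    print(f"{site}: ~{propagation_rtt_ms(km):.1f} ms round trip")
```

Under these assumptions the edge site adds about 0.3 ms of round-trip propagation delay, compared with about 22.5 ms for the distant region.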

Security and Monitoring

Securing an AI data center isn’t simply about deploying firewalls; it means weaving multi-layered cybersecurity measures across every operational layer. From my hands-on infrastructure work, I’ve found that encryption and identity management systems must operate in tandem to meet GDPR, HIPAA, and other regulatory compliance requirements without sacrificing operational efficiency or system reliability.

Real-time energy management systems paired with intelligent monitoring help detect anomalies before they escalate into downtime. Close oversight of power usage across dense server racks also supports sustainable operations. When AI-backed initiatives add behavioral monitoring to the data flow, teams gain measurable visibility into performance, strengthening both security posture and the long-term flexibility of the infrastructure.
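Oversight of rack power usage often comes down to comparing live draw against each rack’s provisioned budget and alerting before breaker or cooling limits are reached. The rack IDs, readings, budgets, and 80% warning threshold below are hypothetical.

```python
# Minimal sketch: flag racks drawing close to their provisioned power budget.
# Rack IDs, readings, budgets, and the warning threshold are hypothetical.


def power_budget_alerts(rack_draw_kw: dict, rack_budget_kw: dict, warn_at: float = 0.8):
    """Return (rack, utilization) pairs at or above warn_at, highest utilization first."""
    alerts = []
    for rack, draw_kw in rack_draw_kw.items():
        utilization = draw_kw / rack_budget_kw[rack]
        if utilization >= warn_at:
            alerts.append((rack, utilization))
    return sorted(alerts, key=lambda item: item[1], reverse=True)


live_draw = {"A01": 31.0, "A02": 38.0, "A03": 22.0}  # kW, hypothetical telemetry
budgets = {"A01": 40.0, "A02": 40.0, "A03": 40.0}    # kW provisioned per rack
for rack, util in power_budget_alerts(live_draw, budgets):
    print(f"{rack}: {util:.0%} of budget")  # A02: 95% of budget
```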

Define Your Data Center Goals

Before touching hardware, businesses must answer a sharper question: what specific business outcomes does this AI data center exist to serve? From data analytics to large-scale machine learning models, each objective reshapes every downstream decision; network architecture, hardware density, and deployment strategy all depend on clarity at this stage.

Experienced practitioners understand that AI workloads behave nothing like traditional IT operations: their requirements for immense processing power, future scalability, and low-latency networking cannot be retrofitted after build-out. Defining goals early aligns your infrastructure with operational workflows, reduces scope drift, and positions the facility for real-time analytics without costly redesigns.

Choose the Right Location

Seasoned engineers rarely lead with server specs — they start with location. Reliable power sources, regulatory compliance, and tax incentives quietly determine whether an AI facility thrives or hemorrhages capital before a single model trains.

Climate conditions directly shape cooling requirements and operational costs, while proximity to skilled labor and to renewable energy such as wind and solar helps align energy efficiency with business objectives. Distance to the users and data sources the facility serves, in turn, sets a floor on network latency.
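One way to quantify how climate shapes cooling cost is to count the hours in which outside air is cool enough for economizer (free) cooling. This sketch treats an hour as usable when the outdoor temperature plus an assumed heat-exchanger approach stays at or below the supply-air setpoint. The 24 °C setpoint and 4 K approach are hypothetical, and humidity, which also limits economizer use, is ignored.

```python
# Minimal sketch: share of hours suitable for economizer (free) cooling.
# Setpoint and approach temperature are assumptions; humidity limits are ignored.


def free_cooling_share(hourly_outdoor_c, supply_setpoint_c=24.0, approach_k=4.0):
    """Fraction of hours where outdoor air plus the approach stays at or below the setpoint."""
    if not hourly_outdoor_c:
        raise ValueError("need at least one hourly reading")
    usable = sum(1 for t in hourly_outdoor_c if t + approach_k <= supply_setpoint_c)
    return usable / len(hourly_outdoor_c)


# Hypothetical sample; a real study would use a full year (8,760 hours) of site weather data.
print(free_cooling_share([10.0, 20.0, 21.0, 30.0]))  # 0.5
```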

Measuring Business Impact of AI Data Centers

AI data centers have changed how organizations quantify return on investment. Beyond raw infrastructure costs, measurable outcomes now anchor each strategic investment decision. By accelerating innovation and compressing product development cycles, these facilities generate AI-led insights that expose untapped opportunities, helping leadership streamline processes and align operational efficiency with organizational objectives at scale.
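Quantifying return on investment can start with an undiscounted calculation like the one below. The capital cost, annual benefit, and operating cost are hypothetical, and a real business case would add discounting (for example, net present value), depreciation, taxes, and financing costs.

```python
# Minimal sketch: undiscounted ROI and simple payback for an infrastructure investment.
# Ignores the time value of money, depreciation, taxes, and financing.


def simple_roi(capex: float, annual_benefit: float, annual_opex: float, years: int):
    """Return (roi, payback_years); roi = (cumulative net benefit - capex) / capex."""
    net_annual = annual_benefit - annual_opex
    roi = (net_annual * years - capex) / capex
    payback_years = capex / net_annual if net_annual > 0 else float("inf")
    return roi, payback_years


roi, payback = simple_roi(capex=50e6, annual_benefit=25e6, annual_opex=10e6, years=5)  # hypothetical
print(f"5-year ROI: {roi:.0%}, payback: {payback:.1f} years")  # 5-year ROI: 50%, payback: 3.3 years
```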

Scalable solutions built around advanced AI models avoid costly infrastructure overhauls while processing large datasets at high speed. Automation shortens time to market and lowers operational costs. Customer engagement strengthens when operational processes serve strategic initiatives purposefully. Ultimately, scalability lets organizations innovate, satisfy stakeholders, and secure long-term value as business needs evolve.

AI Data Center Sustainability and Scalability in 2026

In 2026, AI data center design firmly prioritizes sustainability as a core operational commitment. Renewable energy sources such as solar, wind, and hydroelectric power anchor decarbonization strategies, reducing the ecological footprint of surging energy requirements across industries.
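The footprint reduction from renewable supply can be estimated by assigning grid carbon intensity only to the energy not covered by renewables. The average load, the grid intensity of 0.4 kg CO2/kWh, and the renewable shares below are hypothetical, and real carbon accounting distinguishes location-based and market-based methods that this sketch does not model.

```python
# Minimal sketch: annual emissions from facility energy use and renewable share.
# Load, grid intensity, and renewable share are hypothetical inputs.

HOURS_PER_YEAR = 8760


def annual_emissions_tonnes(avg_facility_kw: float, grid_kg_co2_per_kwh: float,
                            renewable_share: float) -> float:
    """Tonnes of CO2 per year, counting only the non-renewable share of energy."""
    if not 0.0 <= renewable_share <= 1.0:
        raise ValueError("renewable_share must be between 0 and 1")
    energy_kwh = avg_facility_kw * HOURS_PER_YEAR
    return energy_kwh * (1 - renewable_share) * grid_kg_co2_per_kwh / 1000


for share in (0.0, 0.5):
    tonnes = annual_emissions_tonnes(avg_facility_kw=1920, grid_kg_co2_per_kwh=0.4,
                                     renewable_share=share)
    print(f"renewable share {share:.0%}: {tonnes:,.0f} t CO2/year")
```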

Scalability demands that operators move well beyond static builds. Modular designs allow compute racks, storage nodes, and networking equipment to expand without disrupting existing operations. Cloud-native platforms and hybrid cloud strategies provide the agility to absorb increased workloads from future AI technologies.

Edge computing frameworks cut latency by running AI applications near data sources. This hybrid approach can also support sustainability goals by reducing the volume of data moved across the network, lowering energy consumption and operational costs for both small-scale AI applications and enterprise-level machine learning models.

Aligning with regulatory and environmental standards turns data centers into strategic assets for broader AI adoption. Automation driven by AI-led insights strengthens sustainable operations, improving efficiency and cost management while supporting future-ready growth.

FAQs

What Is the Purpose of an AI Data Center?

An AI data center exists to deliver the massive computational power required by machine learning models and large-scale model training. Unlike standard facilities, it is built around processing speed, high-performance infrastructure, and scalable design, which lets AI initiatives across industries reach value faster and supports broader business innovation.

At its core, this specialized facility powers AI applications ranging from predictive analytics to real-time operations, using energy-efficient systems and robust cooling. It is designed to meet sustainability standards and operate efficiently while leaving room for future growth. In my experience, clear goals and a defined strategic purpose are what every successful deployment has in common.

How Does an AI Data Center Differ from a Traditional One?

Traditional data centers handle routine data processing, but AI data centers are engineered for high-intensity machine learning models and massive datasets. They rely on dense server racks, scalable power, and efficient airflow management. Redundancy matters deeply here, because high-energy processing pushes infrastructure beyond what most legacy facilities were designed to sustain.

Unlike conventional setups, AI data centers integrate graphics processing units (GPUs), tensor processing units (TPUs), and high-capacity memory to accelerate model training and data analysis. Fast storage built on NVMe solid-state drives reduces I/O bottlenecks, while ultra-low-latency networks keep distributed processing running smoothly. Together, these components make advanced automation and modern AI innovation practical at scale.

How Do Advanced Cooling Systems Benefit AI Data Centers?

Managing heat dissipation effectively separates high-performing facilities from struggling ones. Robust cooling systems directly influence operational performance by preventing thermal throttling in high-performance computing hardware. From my experience overseeing dense GPU clusters, inadequate cooling silently degrades computational throughput before any failures appear. Modern AI workloads generate thermal loads that traditional approaches simply cannot handle efficiently.

Advanced cooling technologies let facilities sustain very high power draw without sacrificing cooling efficiency under load. Cooler climates naturally reduce mechanical cooling overhead, but precision liquid cooling inside racks handles high-intensity AI workloads where ambient air solutions fall short. Smart infrastructure that integrates sensors and software-defined controls adjusts thermal responses dynamically, protecting complex models during continuous intensive computation while extending hardware life.

Why Is Scalability Important for AI Data Centers?

Scalability and flexibility determine how well AI data centers cope with evolving demands. Infrastructure must accommodate growth from current to future requirements without complete overhauls. A flexible layout allows adaptation to emerging processing standards and prevents costly rebuilds. Organizations that build scalable foundations today avoid operational bottlenecks tomorrow and sustain their competitive advantage.

Unlike traditional deployments, scalable AI data centers grow through modular expansion. High-speed storage paired with strong network connectivity helps reduce latency and improve efficiency for specific applications. Meeting increasing requirements while managing power demands calls for the right location and architecture; innovation stalls without infrastructure designed to scale intelligently.

Conclusion

Building an AI data center demands more than strategic investment; it requires foresight. From selecting reliable power sources to deploying specialized hardware such as GPUs and TPUs, each decision shapes long-term success. The foundation lies in aligning infrastructure investments with business objectives so that every layer delivers tangible business value.

Sustainability isn’t optional; it’s a priority. Focusing on energy efficiency, renewable energy sources, and immersion cooling reduces both operational costs and ecological footprint. As AI adoption accelerates across industries, facilities must balance power capacity with sustainability goals. Thoughtful AI data center design positions these systems as strategic assets for future-ready growth.

Scalability defines a well-built facility. Deploying edge computing with localized processing reduces latency, while high-bandwidth networks support machine learning inference across growing workloads. Redundant connections maintain reliability under peak usage. Ultimately, AI infrastructure that scales intelligently delivers stronger returns on investment and sustains cost efficiency throughout its operational lifecycle.
